To CDN or Not to CDN

Published Apr 23, 2026 by Editorial Team

A CDN is useful when geography, traffic volume, or bandwidth cost is part of the problem. It is not an automatic upgrade for every website.

The better question is not "Should modern sites use a CDN?" It is "What problem is the CDN solving here, and is that problem large enough to justify another layer in the request path?"

That distinction matters because the benefits are real, but so are the tradeoffs. For some teams, a CDN removes latency and protects the origin. For others, especially smaller sites with modest traffic, it mostly adds another system to configure, observe, and debug.

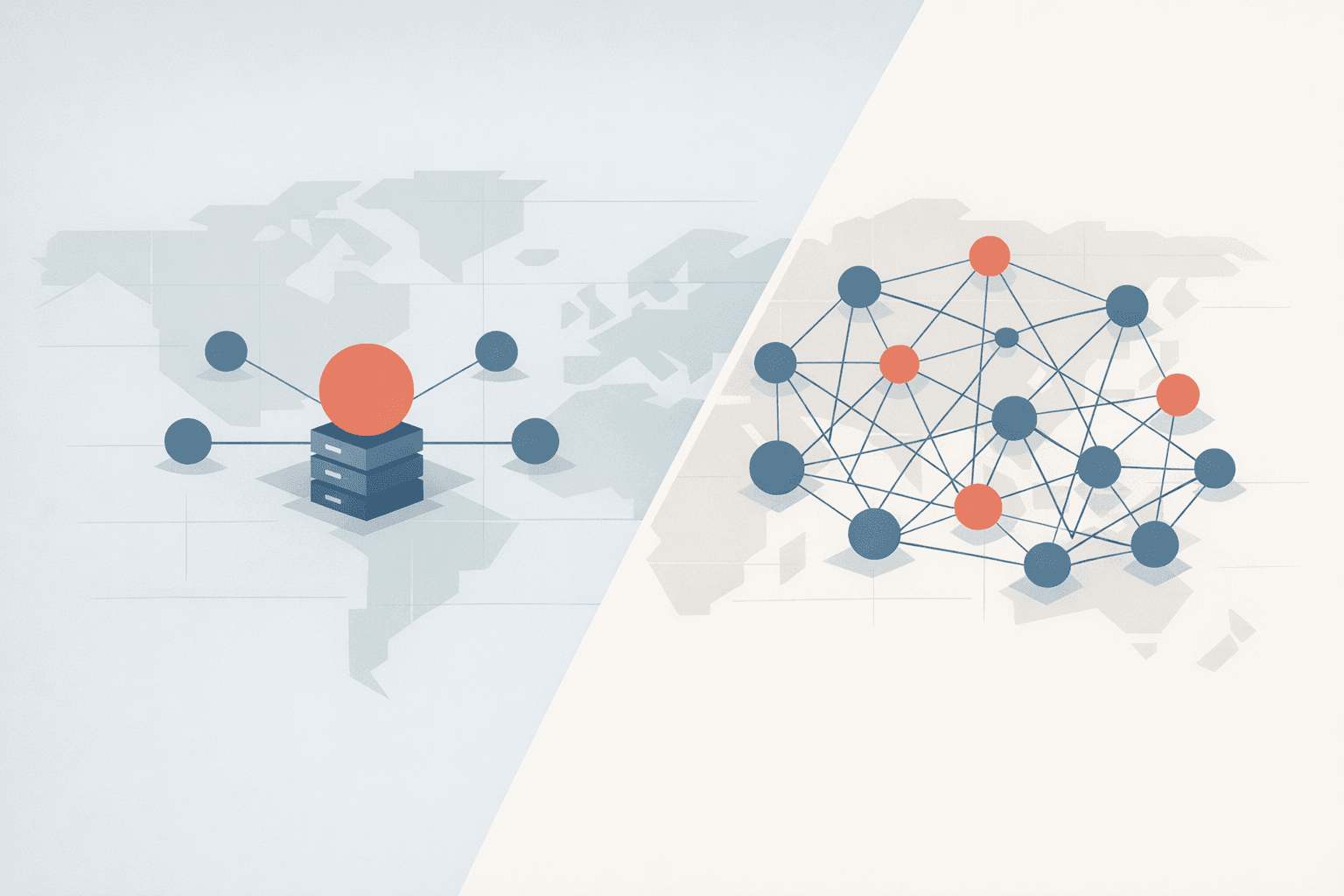

What a CDN actually is

At a high level, a CDN is a distributed caching layer that sits between users and your origin. NIST defines the term at the standards level, and vendor documentation from AWS and Google describes the same basic operating model: content is cached at geographically distributed edge locations so requests can often be served closer to the user instead of going all the way back to the origin server. (NIST glossary: Content Delivery Networks, Amazon CloudFront Developer Guide, Google Cloud CDN docs)

In practice, that usually means:

- the first request may still go back to origin

- later requests can be served from an edge cache

- the origin does less work when the cache hit rate is good

- users who are far from the origin often see lower latency

web.dev's explanation is a useful plain-language version of the same idea: caching content on CDN servers can deliver resources more quickly and reduce load on the origin server. (web.dev: Content delivery networks)
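The miss-then-hit behavior described above can be sketched with a toy model of a single edge location. This is an illustration only, not how any particular CDN is implemented: `EdgeCache`, `origin`, and the 60-second TTL are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple


@dataclass
class EdgeCache:
    """Toy model of one edge location: a TTL cache in front of an origin."""
    fetch_from_origin: Callable[[str], str]
    ttl_seconds: float = 60.0  # assumed TTL, purely illustrative
    _store: Dict[str, Tuple[str, float]] = field(default_factory=dict)
    origin_requests: int = 0

    def get(self, path: str, now: float) -> Tuple[str, str]:
        """Return (body, "HIT" or "MISS") for a request arriving at time `now`."""
        cached = self._store.get(path)
        if cached is not None and cached[1] > now:
            return cached[0], "HIT"
        # Missing or expired: go back to origin and store the fresh copy.
        body = self.fetch_from_origin(path)
        self.origin_requests += 1
        self._store[path] = (body, now + self.ttl_seconds)
        return body, "MISS"


def origin(path: str) -> str:
    """Stand-in for the real origin server."""
    return f"content of {path}"


edge = EdgeCache(fetch_from_origin=origin, ttl_seconds=60.0)
statuses = [edge.get("/logo.png", now=t)[1] for t in (0.0, 1.0, 30.0, 61.0)]
print(statuses)              # ['MISS', 'HIT', 'HIT', 'MISS']
print(edge.origin_requests)  # 2
```

Four requests reach the edge, but only two reach the origin: the first one and the one that arrives after the cached copy has expired.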

The case for using a CDN

There are several good reasons to put a CDN in front of a site.

1. Lower latency for distributed audiences

The most obvious benefit is distance. If your origin is in one region and your users are spread across countries or continents, serving static assets from edge locations closer to those users can cut travel time noticeably. AWS describes this in straightforward terms: requests are routed to the edge location that provides the lowest latency, which improves performance and time to first byte. Cloudflare describes the same benefit as reducing the physical distance between users and website resources. (Amazon CloudFront Developer Guide, Cloudflare: What is a CDN?)
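To get a feel for why distance matters, here is a rough back-of-the-envelope sketch. It assumes signals travel through fiber at about 200,000 km/s (roughly two-thirds of the speed of light in a vacuum) and ignores routing detours, queuing, and server time, so the numbers are lower bounds rather than measurements. The function name and the distances are hypothetical.

```python
FIBER_KM_PER_SECOND = 200_000  # assumed: roughly two-thirds of the speed of light in a vacuum


def min_round_trip_ms(distance_km: float, round_trips: int = 1) -> float:
    """Lower bound on network time for `round_trips` round trips over `distance_km`."""
    return round_trips * 2 * distance_km * 1000 / FIBER_KM_PER_SECOND


print(min_round_trip_ms(10_000))                 # 100.0 (far-away origin)
print(min_round_trip_ms(500))                    # 5.0   (nearby edge location)
print(min_round_trip_ms(10_000, round_trips=3))  # 300.0
```

The last line uses three round trips as an illustrative figure for a fresh connection that needs setup before the first byte arrives. The point is that the distance penalty multiplies with every round trip, which is why moving static assets closer to users can be noticeable.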

2. Less origin load and bandwidth pressure

When a CDN serves cached assets, those requests do not hit your origin every time. That can reduce bandwidth consumption and protect the application server from unnecessary work. web.dev explicitly calls out lower origin load as a core caching benefit, and Cloudflare's overview frames reduced origin bandwidth usage as one of the main economic advantages of a CDN model. (web.dev: Content delivery networks, Cloudflare: What is a CDN?)

This matters most when you serve:

- image-heavy marketing pages

- downloadable assets

- video or large media files

- traffic spikes tied to launches, campaigns, or seasonality
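A quick way to judge whether origin offload matters for a given site is to run the arithmetic. The sketch below is a simplification: it treats every cache hit as a request the origin never sees and ignores revalidation traffic. The function name and the traffic figures (one million requests, 200 KB average response, 90% hit ratio) are made up for illustration.

```python
def origin_load(total_requests: int, avg_response_bytes: int, hit_ratio: float) -> dict:
    """Estimate what still reaches the origin for a given cache hit ratio."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    edge_requests = round(total_requests * hit_ratio)
    origin_requests = total_requests - edge_requests
    return {
        "origin_requests": origin_requests,
        "origin_gb": origin_requests * avg_response_bytes / 1e9,
    }


without_cdn = origin_load(1_000_000, 200_000, hit_ratio=0.0)
with_cdn = origin_load(1_000_000, 200_000, hit_ratio=0.9)
print(without_cdn)  # {'origin_requests': 1000000, 'origin_gb': 200.0}
print(with_cdn)     # {'origin_requests': 100000, 'origin_gb': 20.0}
```

If the same calculation for your own traffic produces a difference that is small in absolute terms, that is a hint the offload argument may not be the one that justifies a CDN.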

3. Better resilience around spikes and edge failure

Because a CDN is distributed, it can absorb more traffic and route around local failures better than a single origin setup on its own. Cloudflare's general CDN documentation points to traffic distribution, failover, and redundancy as core reasons CDNs improve uptime. AWS also notes that serving cacheable content from edge locations can sharply reduce the number of requests reaching the origin during request floods. (Cloudflare: What is a CDN?, AWS: CloudFront and DDoS resiliency)

That does not mean "a CDN solves availability." It means a CDN can be a very helpful part of an availability strategy when the site is important enough for traffic spikes and edge protection to be real concerns.

The case against using a CDN

The downside is not that CDNs are bad. The downside is that they introduce another system with its own rules.

1. Cache invalidation is still operational work

The classic problem has not gone away: if old content is cached at the edge, you need a reliable way to remove or refresh it when the content changes. web.dev is explicit that purging or cache invalidation is critical when you need to retract or update content quickly, including pricing changes and corrections. (web.dev: Content delivery networks)

This is where teams often discover the hidden cost. Once you introduce a CDN, publishing is no longer just "deploy new code." It may also involve:

- cache-control design

- purge strategy

- asset versioning

- debugging stale content reports

For a bigger platform, that is normal operating discipline. For a smaller site, it can be disproportionate overhead.
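Asset versioning is the item on that list that most reduces the need to purge at all. One common approach is to put a content hash in the filename, so a changed file gets a new URL and the old cached copy simply stops being requested. The sketch below is a minimal illustration, assuming a build step renames files this way. `fingerprint` and the example path are hypothetical, and real build tools have their own conventions.

```python
import hashlib
from pathlib import PurePosixPath


def fingerprint(path: str, content: bytes, digest_chars: int = 8) -> str:
    """Insert a short content hash before the file extension."""
    digest = hashlib.sha256(content).hexdigest()[:digest_chars]
    p = PurePosixPath(path)
    return str(p.with_name(f"{p.stem}.{digest}{p.suffix}"))


v1 = fingerprint("/static/app.js", b"console.log('v1');")
v2 = fingerprint("/static/app.js", b"console.log('v2');")
print(v1)  # /static/app.<8 hex chars>.js
print(v2)  # same shape, different hash because the content changed
```

The HTML that references these files still has to be refreshed when it changes, so this narrows the purge problem rather than removing it.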

2. Cache behavior is easy to get subtly wrong

CDN performance depends on configuration quality, not just on turning the service on. web.dev notes that URL shape, query parameters, and request headers affect cache keys and cache hit ratio, and that a properly configured CDN needs the right balance between overly granular caching and insufficiently granular caching. (web.dev: Content delivery networks)

That sounds abstract until it breaks something. A referral parameter, an unexpected query-string order, or the wrong Vary behavior can be enough to fragment the cache or serve the wrong response. This is one reason CDN adoption often grows into broader edge architecture work instead of remaining a simple checkbox.
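To make the fragmentation problem concrete, here is a small sketch of cache-key normalization: drop parameters that do not change the response and sort the rest. It is not any vendor's cache-key logic; real CDNs expose this through their own configuration. The ignored-parameter list and the `cache_key` function are assumptions for the example.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

# Assumed list of parameters that do not affect the response body.
IGNORED_PARAMS = {"ref", "fbclid", "gclid"}
IGNORED_PREFIXES = ("utm_",)


def cache_key(url: str) -> str:
    """Build a normalized key: lowercase host, path, and sorted meaningful params."""
    parts = urlsplit(url)
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name not in IGNORED_PARAMS and not name.startswith(IGNORED_PREFIXES)
    ]
    kept.sort()  # make the key independent of query-string order
    query = urlencode(kept)
    base = f"{parts.netloc.lower()}{parts.path}"
    return f"{base}?{query}" if query else base


a = cache_key("https://example.com/products?size=m&color=red&utm_source=news")
b = cache_key("https://example.com/products?color=red&ref=partner&size=m")
print(a)       # example.com/products?color=red&size=m
print(a == b)  # True
```

Without normalization, those two URLs would occupy two cache entries for the same response. The opposite mistake is just as real: ignoring a parameter that does change the response would serve the wrong content.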

3. Personalized or highly dynamic pages do not magically become easy to cache

A CDN is strongest when the response can be reused safely. Static assets are the easy case. Personalized dashboards, carts, session-sensitive content, and frequently changing HTML are harder. You can still use a CDN in front of these systems, but the value often shifts from "cache everything" to "cache selective assets and protect the origin where you can." That is a valid architecture, but it is more nuanced than the marketing version of "put it on a CDN and the site gets fast."
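One way to picture "cache selective assets" is a small policy that picks a `Cache-Control` header per response type. The directives used here (`private`, `no-store`, `public`, `max-age`, `s-maxage`, `immutable`) are standard HTTP caching directives, but the path prefixes, file suffixes, and lifetimes are hypothetical choices for illustration, and the long-lived static rule assumes versioned filenames.

```python
# Hypothetical route and asset conventions for the example.
PRIVATE_PREFIXES = ("/account", "/cart", "/dashboard")
STATIC_SUFFIXES = (".css", ".js", ".png", ".jpg", ".svg", ".woff2")


def cache_control_for(path: str) -> str:
    """Choose a Cache-Control value based on how safely the response can be reused."""
    if path.startswith(PRIVATE_PREFIXES):
        # Session-sensitive content: keep it out of shared caches entirely.
        return "private, no-store"
    if path.endswith(STATIC_SUFFIXES):
        # Assumes versioned filenames, so a long lifetime is safe.
        return "public, max-age=31536000, immutable"
    # Shared HTML: let the edge hold it briefly, make browsers revalidate.
    return "public, max-age=0, s-maxage=60"


for example_path in ("/cart", "/static/app.3f2a9c1b.js", "/pricing"):
    print(example_path, "->", cache_control_for(example_path))
```

Even in this toy form, the policy has three branches and a list of exceptions to maintain, which is the nuance the marketing version leaves out.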

4. You add another layer to observe, debug, and govern

Every extra layer adds new failure modes and new places where behavior can diverge from local assumptions. DNS, TLS, edge rules, origin headers, purge events, and platform-specific logging now matter to routine delivery. On some platforms there are also governance implications. Google Cloud's documentation, for example, notes that when Cloud CDN is used, data may be stored at serving locations outside the region or zone of the origin server. (Google Cloud CDN overview)

That does not automatically make a CDN the wrong choice, but it does mean the decision can touch compliance, data-location expectations, and incident response, not just raw page speed.

Smaller sites should be especially skeptical

This is the part teams do not hear often enough: a CDN can be completely legitimate and still be unnecessary.

From our own delivery experience, CDNs often add architectural complexity to smaller sites that do not have high traffic demand or meaningful bandwidth pressure. If most users are in one region, pages are already lightweight, and the site is not straining the origin, the biggest wins usually come from simpler work first:

- properly sized images

- compression

- browser caching

- fewer third-party scripts

- better application queries

- cleaner hosting and caching headers

In that situation, a CDN may produce a measurable improvement on paper while still being the wrong trade. You take on:

- more moving parts

- more cache-state debugging

- more deployment coordination

- more vendor-specific configuration

without solving the site's main bottleneck.

That is the core argument against defaulting to a CDN: if the site's real problem is slow templates, oversized images, or excess JavaScript, the CDN is adjacent to the problem, not the answer to it.

A better decision rule

A CDN is usually worth serious consideration when:

- users are geographically distributed

- the site serves a lot of cacheable static content

- bandwidth or origin load is becoming expensive

- traffic spikes can overwhelm the origin

- edge security and resilience features are operationally valuable

A CDN is often optional, or at least not the first move, when:

- traffic is modest

- most users are near the origin

- the site is small and easy to serve directly

- most important responses are personalized or dynamic

- the team does not want to carry extra cache and purge complexity yet

Bottom line

The right answer is not "always use a CDN" or "never use a CDN."

A CDN is a strong tool when the site needs lower-latency global delivery, origin offload, or better resilience at the edge. But if the site is still small, traffic is modest, and the origin is not under real pressure, a CDN can add more architecture than value.

For smaller sites, that is usually the deciding factor. If the CDN solves a real scaling or delivery problem, it earns its place. If it mainly adds rules, purges, and one more platform to think about, then it is probably too early.