Websites Built for Humans Only Will Lose to Websites Built for Humans and Agents

Published May 5, 2026 by Editorial Team

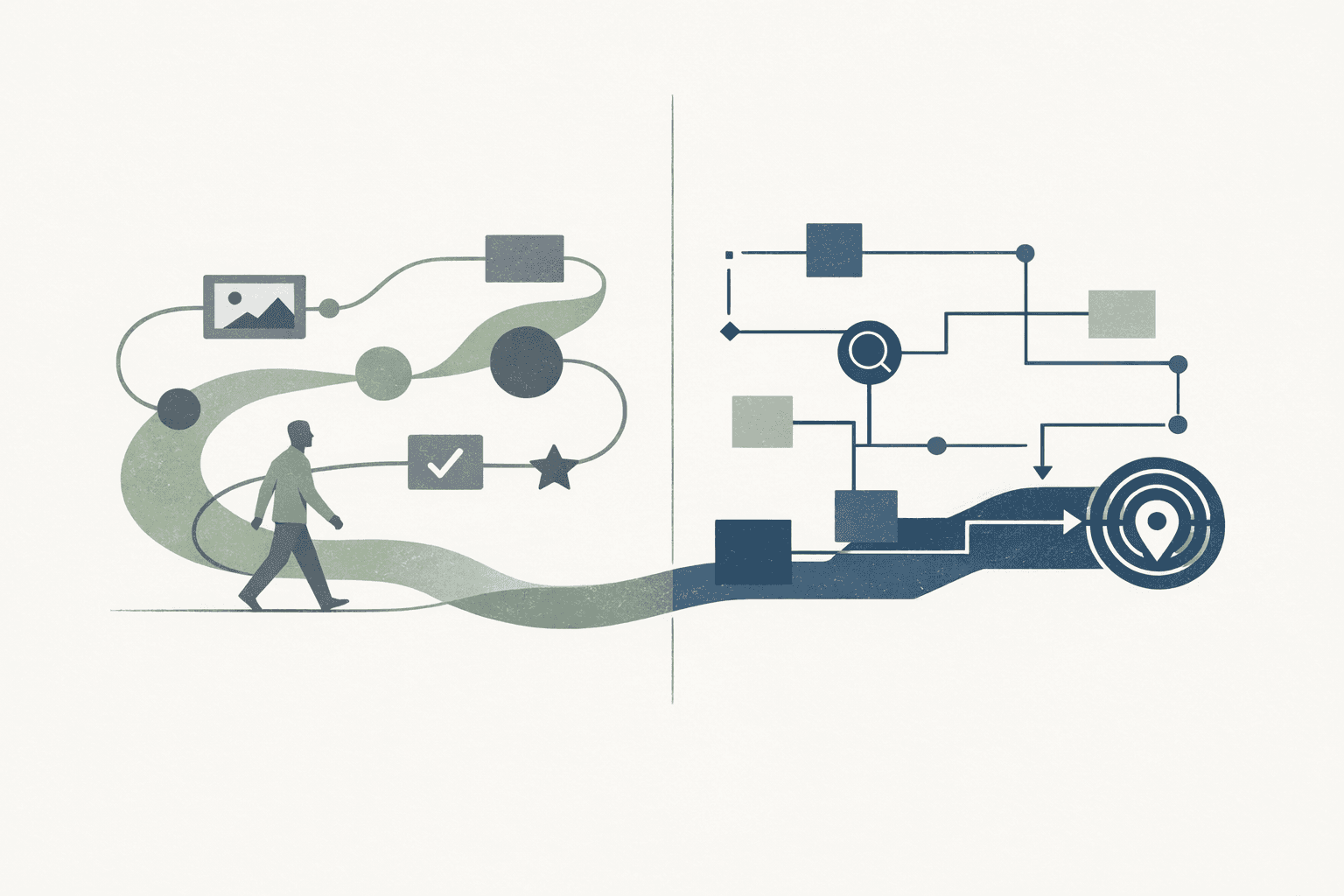

The next generation of winning websites will not be the ones that choose between people and machines.

They will be the ones that serve both.

That distinction matters because the web is no longer mediated only by human eyes moving through menus and landing pages. It is increasingly mediated by systems that search, summarize, compare, extract, route, and sometimes act on behalf of users.

Google's own guidance keeps the baseline clear: the same SEO best practices still apply to AI features in Search, and there are no extra technical requirements or special schema just to appear in AI Overviews or AI Mode. A page still needs to be indexable, useful, and eligible for Search. (Google Search Central: AI features and your website)

That is the baseline.

It is not the whole competitive picture.

While Google says you do not need to create special AI files just to appear, the broader web is moving toward environments where agents benefit from sites that are easier to parse, navigate, and act on. Cloudflare's April 17, 2026, agent-readiness launch makes that shift unusually visible. It frames the problem around discoverability, content formats, bot access control, and emerging machine-facing standards, and its first large scan found that support for newer agent standards is still extremely rare. (Cloudflare: Introducing the Agent Readiness score)

The opportunity is obvious.

If most sites are still built as if only humans will navigate them, then sites designed for humans and agents will increasingly have an advantage.

Human-First Still Matters

This is not an argument for replacing people-first design with machine-first design.

Google's people-first content guidance is still the right anchor. Its systems are designed to prioritize helpful, reliable information created to benefit people, not content made primarily to manipulate rankings. It explicitly asks whether content is substantial, original, trustworthy, and written by people who actually know the topic. (Google Search Central: Creating helpful, reliable, people-first content)

So the core standard does not change:

- the content still has to be worth reading

- the page still has to load and function well

- the user still has to trust what they found

- the site still needs a clear purpose and a coherent audience

But that standard now has a second layer.

A site can be good for humans and still be unnecessarily hard for machines to work with.

What "Built for Humans Only" Usually Looks Like

In practice, websites built only for humans tend to have a few recurring traits:

- key meaning is implied visually instead of stated clearly in text

- navigation makes sense in the UI but not in the underlying structure

- important actions are obvious to a person but not described in machine-readable ways

- product or service capabilities exist, but there is no clear public surface that describes how they work

- the site assumes a user will click around patiently, not that a system may need to locate the right answer or next step quickly

Google indirectly points to the same failure modes in its AI search guidance. It recommends making important content available in textual form, keeping content discoverable through internal links, and ensuring structured data matches visible page content. (Google Search Central: AI features and your website)

Those are not just SEO hygiene rules anymore. They are machine-interpretability rules.

Machine Navigation Is Becoming a Real UX Layer

One of the biggest strategic mistakes teams can make right now is treating agent navigation as a future-only problem.

It is already showing up in fragments:

- AI search systems can fan a single question out into multiple related searches and surface supporting links differently from classic search

- coding and research agents browse documentation, product pages, and public tools

- platforms are experimenting with machine-facing standards for authentication, tool discovery, and transactional flows

Google's Search team said in May 2025 that success in its AI search experiences still comes from the same fundamentals: unique value, strong page experience, indexable content, pages that meet Search's technical requirements, and structured data that matches the visible page content. But that same post also makes the shift clear: AI search creates more complex queries, more follow-up behavior, and more chances for users to land on supporting pages deeper in the site. (Google Search Central Blog: succeeding in AI search)

That means the page is no longer the only unit of usability.

The site itself becomes a navigable knowledge surface.

What Sites Built for Humans and Agents Do Better

The practical difference is not magic. It is clarity.

Sites that work for both humans and agents tend to be better at five things.

1. They Make Meaning Explicit

Agents are better than they used to be at inferring meaning from messy pages.

That does not mean inference is the best operating model.

A stronger site states what it is, what it offers, what each page is about, and how topics relate to one another in plain text and clean structure. That is consistent with Google's advice to make important content available in text and to use structured data responsibly. (Google Search Central: AI features and your website)

If the page only "makes sense when you look at it," then it is under-specified.
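
Here is a minimal Python sketch that makes "structured data matches visible page content" concrete as a self-check a team might run. It assumes the page embeds JSON-LD in `<script type="application/ld+json">` tags. It flags a declared `name` or `description` as suspect when that string never appears in the visible text. The names `PageExtractor` and `mismatched_fields` and the sample page are hypothetical. Plain substring matching is a crude heuristic of our own, not how Google evaluates markup.

```python
import json
from html.parser import HTMLParser


class PageExtractor(HTMLParser):
    """Collect JSON-LD blocks separately from the text a visitor would see."""

    def __init__(self):
        super().__init__()
        self.json_ld = []          # raw JSON-LD strings, one per script tag
        self.visible = []          # text fragments outside <script>/<style>
        self._skip = False         # True while inside <script> or <style>
        self._in_json_ld = False   # True while inside a JSON-LD script
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip = True
            self._in_json_ld = (
                tag == "script" and dict(attrs).get("type") == "application/ld+json"
            )
            self._buf = []

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            if self._in_json_ld:
                self.json_ld.append("".join(self._buf))
            self._skip = False
            self._in_json_ld = False

    def handle_data(self, data):
        if self._in_json_ld:
            self._buf.append(data)
        elif not self._skip:
            self.visible.append(data)


def normalize(text):
    """Lowercase and collapse whitespace so comparisons ignore layout."""
    return " ".join(text.split()).lower()


def mismatched_fields(html, fields=("name", "description")):
    """Return (field, value) pairs declared in JSON-LD but absent from visible text."""
    parser = PageExtractor()
    parser.feed(html)
    parser.close()
    visible = normalize(" ".join(parser.visible))
    problems = []
    for block in parser.json_ld:
        data = json.loads(block)
        items = data if isinstance(data, list) else [data]
        for item in items:
            for field in fields:
                value = item.get(field)
                if isinstance(value, str) and normalize(value) not in visible:
                    problems.append((field, value))
    return problems


page = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product",
 "name": "Trail Runner 2", "description": "Waterproof trail shoe"}
</script>
</head><body>
<h1>Trail Runner 2</h1>
<p>A lightweight trail shoe.</p>
</body></html>"""

print(mismatched_fields(page))
# [('description', 'Waterproof trail shoe')]
```

The check catches the kind of drift where markup promises something the page never says. It does not handle `@graph` containers or nested objects, which a real audit would need.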

2. They Turn Navigation Into a Public System, Not Just a UI

Human visitors can recover from weak navigation by exploring, scrolling, or going back.

Agents are more effective when the site itself exposes a clean graph of where information lives.

That means:

- strong internal linking

- stable URLs

- crawlable discovery paths

- fewer dead-end templates

- less reliance on hidden state and ambiguous interaction patterns

Sites that maintain those basics are easier for search systems to index, easier for assistants to cite, and easier for any automated system to traverse without guesswork.
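
One way to test whether navigation works as a public system is to treat internal links as a graph and crawl it. The sketch below assumes you already have a map from each URL to the internal links on that page. The `site` dictionary is invented for illustration. A breadth-first traversal from the homepage flags three problems: pages no link path reaches, templates with no onward links, and links pointing at URLs the map does not know.

```python
from collections import deque

# Hypothetical site map: each URL maps to the internal links found on that page.
site = {
    "/": ["/products", "/docs"],
    "/products": ["/products/trail-runner-2", "/"],
    "/products/trail-runner-2": [],          # dead end: no onward links
    "/docs": ["/docs/api", "/"],
    "/docs/api": ["/docs"],
    "/pricing": ["/"],                       # orphan: nothing links to it
}


def audit_links(graph, start="/"):
    """Breadth-first crawl from `start`; report orphans, dead ends, and broken links."""
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in graph.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    orphans = sorted(set(graph) - seen)
    dead_ends = sorted(url for url, links in graph.items() if not links)
    broken = sorted({t for links in graph.values() for t in links if t not in graph})
    return {"orphans": orphans, "dead_ends": dead_ends, "broken": broken}


print(audit_links(site))
# {'orphans': ['/pricing'], 'dead_ends': ['/products/trail-runner-2'], 'broken': []}
```

A real audit would build the map by crawling the site. It would also need to account for links that appear only after scripts run or behind interaction, which is the kind of hidden state the list above warns about.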

3. They Describe Actions, Not Just Pages

This is where many sites are still behind.

Humans can look at a page and infer that "book a demo," "search inventory," "request a quote," or "check availability" are meaningful next steps.

Machines benefit when those actions are described more explicitly.

Schema.org's Action model exists precisely to describe capabilities that can be performed, including potential actions and the entry points used to execute them. The documentation describes potentialAction as a way to represent actions available on a thing, and EntryPoint as a way to specify the target, HTTP method, MIME types, and URL template for carrying out that action. (Schema.org Actions)

Not every business needs to model every user flow this way.

But the broader point is important: the sites that expose structured actions will be easier for machines to understand than the sites that merely imply those actions through buttons and layout.
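
As an illustration, here is how a "search inventory" action might be declared with Schema.org's `potentialAction` and `EntryPoint`. The example is built in Python and serialized as JSON-LD. The site name, URL, and `search_term` variable are placeholders. The `query-input` annotation follows the `-input` convention in the Schema.org Actions documentation. Check the current vocabulary, and any search engine's own documentation, before relying on a given property. The hypothetical `fill_template` helper only substitutes simple `{name}` variables and is not a full RFC 6570 URI Template implementation.

```python
import json
from urllib.parse import quote

# Hypothetical store. The name, URL, and "search_term" variable are placeholders.
store = {
    "@context": "https://schema.org",
    "@type": "WebSite",
    "name": "Example Outfitters",
    "url": "https://www.example.com/",
    "potentialAction": {
        "@type": "SearchAction",
        # EntryPoint says where and how the action is carried out.
        "target": {
            "@type": "EntryPoint",
            "urlTemplate": "https://www.example.com/inventory?q={search_term}",
            "httpMethod": "GET",
            "contentType": "text/html",
        },
        # Schema.org "-input" annotation: the search_term variable is required.
        "query-input": "required name=search_term",
    },
}


def fill_template(entry_point, **values):
    """Substitute simple {name} variables (a naive stand-in for RFC 6570)."""
    url = entry_point["urlTemplate"]
    for key, value in values.items():
        url = url.replace("{" + key + "}", quote(value))
    return url


# JSON-LD you could embed in a <script type="application/ld+json"> tag.
print(json.dumps(store, indent=2))

print(fill_template(store["potentialAction"]["target"], search_term="rain jacket"))
# https://www.example.com/inventory?q=rain%20jacket
```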

4. They Publish API-Adjacent Discoverability Surfaces

If your business exposes services, data, workflows, or integrations, then discoverability should not stop at the marketing site.

The OpenAPI Initiative describes the OpenAPI Specification (OAS) as an open standard for describing APIs. It says a properly defined OpenAPI description allows both humans and computers to discover and understand a service's capabilities without needing source code, extra documentation, or traffic inspection. (OpenAPI Initiative: What is OpenAPI?)

That is the right mental model for API-adjacent discoverability.

A machine-capable site does not force every system to reverse-engineer its service model from screenshots, blog prose, or form labels. It exposes clear technical artifacts where appropriate:

- public API descriptions

- developer docs that are easy to traverse

- stable endpoints and predictable response shapes

- documentation pages that explain capabilities in plain language

Even if your primary buyer is still human, the system helping that buyer compare vendors may not be.
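
To ground the OpenAPI point, here is a minimal OpenAPI 3.1 description for a hypothetical inventory-search endpoint. It is written as a Python dictionary and serialized to JSON. The server URL, path, `operationId`, and response fields are invented for illustration. The hypothetical `list_operations` helper shows the payoff the OpenAPI Initiative describes: a client can enumerate what the service does from the description alone, without reading source code.

```python
import json

# Minimal OpenAPI 3.1 description; every name and URL here is hypothetical.
openapi_doc = {
    "openapi": "3.1.0",
    "info": {"title": "Example Outfitters Inventory API", "version": "1.0.0"},
    "servers": [{"url": "https://api.example.com"}],
    "paths": {
        "/inventory": {
            "get": {
                "operationId": "searchInventory",
                "summary": "Search products that are currently in stock",
                "parameters": [
                    {
                        "name": "q",
                        "in": "query",
                        "required": True,
                        "schema": {"type": "string"},
                        "description": "Free-text product query",
                    }
                ],
                "responses": {
                    "200": {
                        "description": "Matching products",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "array",
                                    "items": {
                                        "type": "object",
                                        "properties": {
                                            "sku": {"type": "string"},
                                            "name": {"type": "string"},
                                            "in_stock": {"type": "integer"},
                                        },
                                        "required": ["sku", "name"],
                                    },
                                }
                            }
                        },
                    }
                },
            }
        }
    },
}

# Path items may also hold keys like "parameters" or "summary", so filter to HTTP methods.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def list_operations(doc):
    """Enumerate (METHOD, path, operationId) triples, as a client might."""
    return [
        (method.upper(), path, op.get("operationId"))
        for path, item in doc.get("paths", {}).items()
        for method, op in item.items()
        if method in HTTP_METHODS
    ]


spec_text = json.dumps(openapi_doc, indent=2)  # contents you might publish as openapi.json
print(list_operations(openapi_doc))
# [('GET', '/inventory', 'searchInventory')]
```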

5. They Treat Tool and Agent Access as a Product Surface

Some businesses will go further than static discoverability and expose actual tool entry points.

The Model Context Protocol defines a standard, JSON-RPC 2.0-based way for servers to expose tools, prompts, and resources to clients, along with related capabilities and authorization guidance for HTTP-based transports. (Model Context Protocol: Overview)

Not every website needs an MCP server.

But some do need the mindset behind it: if a machine should be able to inspect, query, or act through your system, expose that deliberately instead of leaving it as an accidental side effect of the UI.

That is what separates "a website with some forms" from "a service that can participate in agent workflows."
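
To show what "tool entry points" look like on the wire, the sketch below answers two MCP-style JSON-RPC 2.0 methods, `tools/list` and `tools/call`. It serves one hypothetical `check_availability` tool backed by a stub inventory. It is deliberately not a working MCP server. It skips the initialization handshake, capability negotiation, transport, authorization, and validation of arguments against `inputSchema`. The message shapes reflect our reading of the MCP tools specification, including the error code used for an unknown tool, so confirm them against the current spec.

```python
import json

# Hypothetical tool registry; the name, schema, and stub data are illustrative only.
TOOLS = {
    "check_availability": {
        "description": "Check whether a product SKU is in stock.",
        "inputSchema": {
            "type": "object",
            "properties": {"sku": {"type": "string"}},
            "required": ["sku"],
        },
    }
}

STOCK = {"TR2-42": 7}  # stand-in for a real inventory lookup


def handle(raw):
    """Answer one JSON-RPC 2.0 request for tools/list or tools/call (no handshake, no auth)."""
    msg = json.loads(raw)
    reply = {"jsonrpc": "2.0", "id": msg.get("id")}
    method = msg.get("method")
    if method == "tools/list":
        reply["result"] = {"tools": [{"name": name, **spec} for name, spec in TOOLS.items()]}
    elif method == "tools/call":
        params = msg.get("params", {})
        if params.get("name") not in TOOLS:
            # Unknown tool reported as "invalid params" (assumed; check the spec).
            reply["error"] = {"code": -32602, "message": "Unknown tool"}
        else:
            # Arguments are not validated against inputSchema in this sketch.
            sku = params.get("arguments", {}).get("sku", "")
            count = STOCK.get(sku, 0)
            reply["result"] = {
                "content": [{"type": "text", "text": f"{sku}: {count} in stock"}],
                "isError": False,
            }
    else:
        # Standard JSON-RPC 2.0 code for an unsupported method.
        reply["error"] = {"code": -32601, "message": "Method not found"}
    return json.dumps(reply)


print(handle('{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}'))
print(handle('{"jsonrpc": "2.0", "id": 2, "method": "tools/call", '
             '"params": {"name": "check_availability", "arguments": {"sku": "TR2-42"}}}'))
```

The design point is that the tool's name, description, and input schema are published on purpose. An agent does not have to infer them from a form on a page.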

This Does Not Mean Chasing Every Emerging Standard

There is an easy way to do this badly.

Teams hear "agent-ready" and start scattering experimental files, vague manifests, and half-maintained machine surfaces across the stack without improving the basics.

That is not strategy. It is theater.

Google's guidance is valuable precisely because it keeps the floor stable: indexable content, technical accessibility, page quality, internal links, and structured data that matches the visible page all still matter first. (Google Search Central: AI features and your website, Google Search Central Blog: succeeding in AI search)

The stronger approach is layered:

- keep the human experience strong

- make meaning and navigation more explicit

- expose actions where they are real and stable

- publish machine-readable service descriptions where the business supports them

- add deeper agent-facing surfaces only when they solve a real workflow

That is much more defensible than pretending every company suddenly needs to implement every new agent protocol.

Why This Will Become a Competitive Gap

Cloudflare's early adoption data suggests the web is still at the beginning of this transition. In its April 2026 scan, markdown negotiation passed on only 3.9% of sites, content-signal declarations were rare, and MCP Server Cards plus API Catalogs appeared on fewer than 15 sites in the scanned dataset. (Cloudflare: Introducing the Agent Readiness score)

Early transitions like that do not stay early forever.

Once more buyers, assistants, and software agents begin expecting machine-navigable websites, the organizations that invested early in explicit structure will have an easier time being discovered, interpreted, and selected.

The advantage will not come from abandoning human-centered design.

It will come from extending it.

Bottom Line

Websites built only for humans will increasingly lose to websites built for humans and agents.

Not because people stopped mattering.

Because machine mediation now matters too.

The winning sites will still be useful, credible, and satisfying for human visitors. They will simply also be easier for machines to:

- find

- parse

- navigate

- verify

- and act on

That is the real shift.

The future is not machine-first websites.

It is human-centered websites with machine-grade clarity.